Evaluating recommender systems: survey and framework

Framework for EValuating Recommender systems (FEVR)

Abstract

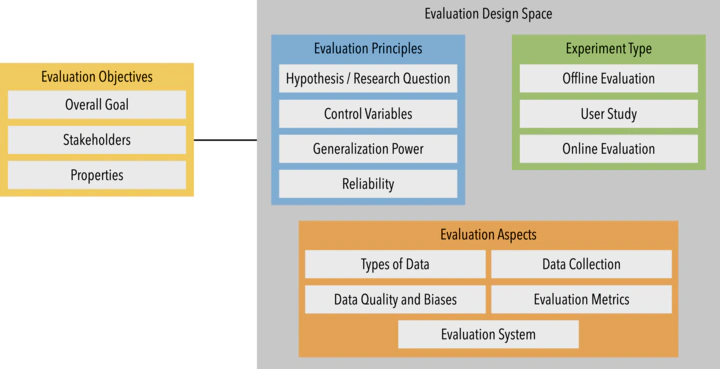

The comprehensive evaluation of the performance of a recommender system is a complex endeavor: many facets need to be considered in configuring an adequate and effective evaluation setting. Such facets include, for instance, defining the specific goals of the evaluation and choosing an evaluation method, the underlying data, and suitable evaluation metrics. In this paper, we consolidate and systematically organize the dispersed knowledge on recommender systems evaluation. We introduce the “Framework for EValuating Recommender systems” (FEVR), which we derive from the discourse on recommender systems evaluation. In FEVR, we categorize the evaluation space of recommender systems. We postulate that the comprehensive evaluation of a recommender system frequently requires considering multiple facets and perspectives. FEVR provides a structured foundation for adopting adequate evaluation configurations that encompass this required multi-facetedness, and it provides a basis for advancing the field. We outline and discuss the challenges of a comprehensive evaluation of recommender systems, and provide an outlook on what we need to embrace and do to move forward as a research community.

JIF 2022: 16.6; CORE 2020: A*; JOURQUAL3: B; VHB 2024: A

Women in RecSys Journal Paper of the Year Award 2023, Senior category